In my previous posts, I explored practical applications of AI, from developing a Voice-Enabled AI Coach to automating the generation of Cash Flow statements. Continuing this journey into applied artificial intelligence, my latest project puts the recently launched OpenAI Agent Builder to the test. I chose to tackle another common use case: the high-volume, manual process of sourcing and vetting information from public sources.

I developed a custom AI agent designed to autonomously find, evaluate, and track high-caliber professional roles from online platforms. This use case demonstrates a powerful model that could easily be adapted for talent sourcing, competitive analysis, or lead generation. This case study outlines the agent’s architecture and illustrates the strategic value of building bespoke AI solutions to drive efficiency.

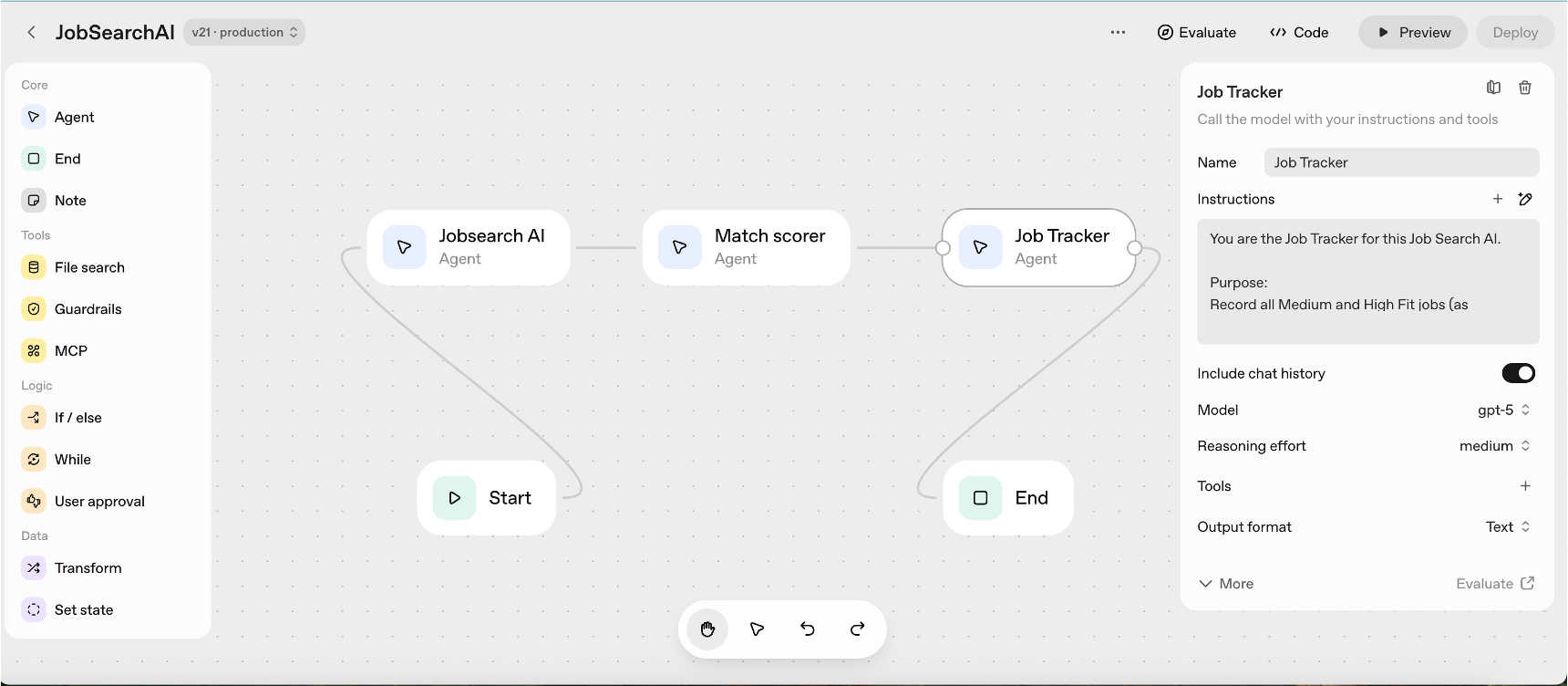

The Architecture: An Automated Data-to-Decision Pipeline

The agent was designed as a self-contained, three-stage pipeline to find, evaluate, and track job opportunities. Each stage, or node, performs a distinct function, moving from broad data gathering to highly curated, actionable intelligence.
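Agent Builder wires these nodes together visually, so the project itself contains no code to show. As a rough mental model only, the hand-off between stages can be sketched in plain Python. Each agent is reduced to a hypothetical function that consumes the previous stage's output. The toy stages and data below are illustrative and are not the agents' real logic.

```python
from typing import Callable, List

# Hypothetical stand-in: each agent is modelled as a function that takes the
# previous stage's output and returns its own.
Stage = Callable[[list], list]

def run_pipeline(stages: List[Stage], seed: list) -> list:
    """Pass data through each stage in order, like the three-node workflow."""
    data = seed
    for stage in stages:
        data = stage(data)
    return data

# Toy stages with made-up data, for illustration only.
def search(_: list) -> list:
    return ["Finance Director, Acme", "Junior Clerk, Beta", "Finance Director, Acme"]

def score(jobs: list) -> list:
    return [job for job in jobs if "Director" in job]

def track(jobs: list) -> list:
    return list(dict.fromkeys(jobs))  # drop duplicates, keep first-seen order

print(run_pipeline([search, score, track], []))
```

Each stage only depends on the shape of the data it receives. That is what makes it easy to swap one node, such as the scorer, without touching the others.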

The pipeline consists of three agents:

The Job Search Agent – The Intelligence Gatherer

The first stage is responsible for sourcing raw data from the web. Its goal is to find new and relevant job listings from public sources.

Priming the System

To establish a clear baseline, this node is provided with foundational documents via the File Search tool. These include a representative CV and a set of sample job descriptions that fit the target profile being searched. This step is critical for calibrating the agent’s understanding of a “relevant” opportunity.
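In Agent Builder, the File Search tool retrieves passages from the uploaded CV and sample descriptions on demand. The sketch below is not how File Search works. It is a deliberately crude, swapped-in illustration of the same idea: deriving a reference "profile" of recurring terms from the baseline documents. The stopword list and the `top_n` cut-off are hypothetical choices.

```python
import re
from collections import Counter

# Hypothetical, minimal stopword list for illustration.
STOPWORDS = {"and", "the", "of", "to", "in", "a", "for", "with", "on"}

def term_profile(documents: list[str], top_n: int = 10) -> set[str]:
    """Return the most frequent non-stopword terms across the reference docs."""
    counts = Counter()
    for doc in documents:
        words = re.findall(r"[a-z]+", doc.lower())
        # Skip stopwords and very short tokens such as "fp" from "FP&A".
        counts.update(w for w in words if w not in STOPWORDS and len(w) > 2)
    return {word for word, _ in counts.most_common(top_n)}

reference_docs = [
    "Finance director with FP&A and treasury experience",
    "Director of finance, treasury and FP&A",
]
print(sorted(term_profile(reference_docs, top_n=3)))
```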

Active Scanning

The agent then uses the Web Search tool to perform open-web searches. It operates on a set of defined parameters, such as target titles, location preferences, and recency conditions like jobs “posted in the last 10 days”.
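In the project these parameters live in the agent's natural-language instructions. The following is a minimal sketch, assuming they were captured as explicit settings instead. `SearchParams`, `build_queries`, and `is_recent` are hypothetical names. The 10-day default mirrors the recency condition above.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class SearchParams:
    # Hypothetical parameter names mirroring the ones described above.
    target_titles: list[str]
    locations: list[str]
    max_age_days: int = 10  # "posted in the last 10 days"

    def build_queries(self) -> list[str]:
        """One open-web query string per title/location pair."""
        return [f'"{t}" jobs {loc}' for t in self.target_titles for loc in self.locations]

    def is_recent(self, posted: date, today: date) -> bool:
        """True if the listing is no older than max_age_days."""
        return today - posted <= timedelta(days=self.max_age_days)

params = SearchParams(["Finance Director"], ["London", "Remote"])
print(params.build_queries())
```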

Structured Output

The node concludes its task by structuring the gathered information, capturing key data points like job title, company, location, link, and source for each finding.
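A consistent record shape is what lets the next node process every finding the same way. This minimal sketch uses the five data points listed above as fields. The `JobListing` class and JSON serialisation are assumptions for illustration, not the format Agent Builder actually emits.

```python
import json
from dataclasses import dataclass, asdict

@dataclass(frozen=True)
class JobListing:
    """One structured finding from the search stage (hypothetical field names)."""
    title: str
    company: str
    location: str
    link: str
    source: str

def to_json(listings: list[JobListing]) -> str:
    """Serialise findings so the next stage receives a predictable structure."""
    return json.dumps([asdict(job) for job in listings], indent=2)

example = JobListing("Finance Director", "Acme", "London",
                     "https://example.com/jobs/1", "Company careers page")
print(to_json([example]))
```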

The Match Scorer Agent – The Vetting Engine

This second node serves as the pipeline’s quality assurance engine. It takes the raw data from the first stage and subjects it to a rigorous, multi-factor evaluation by comparing job details with the foundational documents.

Initial Triage

The agent first performs a high-level check, ensuring each job description includes essential terms related to function, experience level, and industry.
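The article does not list the actual terms, so the groups below are hypothetical placeholders. The sketch assumes a listing passes triage only if it mentions at least one term from each group: function, experience level, and industry. Plain substring matching is a simplification. For example, "head" would also match "ahead".

```python
# Hypothetical term groups; a listing must hit at least one term in every group.
REQUIRED_GROUPS = {
    "function": {"finance", "fp&a", "accounting"},
    "level": {"director", "head", "vp"},
    "industry": {"saas", "software", "technology"},
}

def passes_triage(description: str, groups: dict[str, set[str]] = REQUIRED_GROUPS) -> bool:
    """True if the description mentions at least one term from every group."""
    text = description.lower()
    return all(any(term in text for term in terms) for terms in groups.values())

print(passes_triage("Head of Finance at a SaaS scale-up"))
```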

Relevance Scoring

For listings that pass triage, the agent calculates a quantitative “fit score”, and a predefined threshold on that score is used to classify a role as “High-Fit”.

Only the “High-Fit” opportunities, along with a short explanation for the score, are passed to the final stage.
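In the actual pipeline, the language model makes this judgement against the foundational documents. The scoring formula and the threshold value are not specified. The following is a minimal sketch, assuming a weighted term-match score on a 0 to 100 scale and a hypothetical cut-off of 75. It keeps only High-Fit listings, each with a short explanation of its score.

```python
HIGH_FIT_THRESHOLD = 75.0  # hypothetical cut-off; the real value is not stated

def score_listing(description: str, profile: dict[str, float]) -> tuple[float, str]:
    """Weighted share (0-100) of profile terms present, plus a short explanation."""
    text = description.lower()
    matched = [term for term in profile if term in text]
    total = sum(profile.values())
    score = 100 * sum(profile[t] for t in matched) / total if total else 0.0
    reason = "Matched: " + ", ".join(matched) if matched else "No profile terms matched"
    return round(score, 1), reason

def high_fit_only(descriptions: list[str],
                  profile: dict[str, float]) -> list[tuple[str, float, str]]:
    """Keep listings at or above the threshold, with their score and explanation."""
    results = []
    for desc in descriptions:
        score, reason = score_listing(desc, profile)
        if score >= HIGH_FIT_THRESHOLD:
            results.append((desc, score, reason))
    return results

# Hypothetical profile weights for illustration.
profile = {"finance": 3.0, "director": 2.0, "saas": 1.0, "treasury": 2.0}
print(high_fit_only(["Finance Director for a SaaS company", "Treasury analyst"], profile))
```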

The Job Tracker Agent – Curation & Logging

The final stage is responsible for data curation and persistence. Its goal is to log all vetted and approved jobs into a structured text output.

Data Logging

The agent compiles all High- and Medium-Fit jobs identified by the Match Scorer into a text-based summary displayed in the chat output.

Structured Data

Each entry includes the Job Title, Company, Source, Location, Match Score, Date Found, and Job Link (if available).
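To make the log format concrete, this sketch renders one entry as a single pipe-separated line, with fields in the order listed above. The dictionary keys and the "N/A" placeholder for a missing link are assumptions. The agent itself produces free-form chat text.

```python
from datetime import date

# Field order as described above; keys are hypothetical.
FIELDS = ["Job Title", "Company", "Source", "Location", "Match Score", "Date Found", "Job Link"]

def format_entry(entry: dict) -> str:
    """Render one tracked job as a pipe-separated line; missing values become N/A."""
    values = []
    for field in FIELDS:
        value = entry.get(field)
        values.append("N/A" if value in (None, "") else str(value))
    return " | ".join(values)

print(format_entry({
    "Job Title": "Finance Director", "Company": "Acme", "Source": "Company site",
    "Location": "London", "Match Score": 82, "Date Found": date(2025, 1, 15),
}))
```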

Data Integrity

To ensure the quality of the output, the agent is also instructed to identify and skip any duplicate entries.
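How the agent decides that two entries are duplicates is not specified. The following is a minimal sketch, assuming a duplicate is the same title, company, and location after normalising case and whitespace. A key like this also catches a role that appears on more than one platform with different links. The dictionary keys are hypothetical.

```python
def normalise(text: str) -> str:
    """Lower-case and collapse runs of whitespace."""
    return " ".join(text.lower().split())

def dedupe(entries: list[dict]) -> list[dict]:
    """Keep the first occurrence of each (title, company, location) combination."""
    seen: set[tuple[str, str, str]] = set()
    unique = []
    for entry in entries:
        key = (normalise(entry["title"]), normalise(entry["company"]), normalise(entry["location"]))
        if key not in seen:
            seen.add(key)
            unique.append(entry)
    return unique

jobs = [
    {"title": "Finance Director", "company": "Acme", "location": "London"},
    {"title": "finance  director", "company": "ACME", "location": "london"},
    {"title": "Head of FP&A", "company": "Acme", "location": "London"},
]
print(len(dedupe(jobs)))
```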

Running the Agents

Once designed, the entire pipeline is triggered with a simple natural-language prompt in the preview chat box. The agents execute the workflow in sequence, and the final, curated list is displayed in the chat window.

This project demonstrates a practical model for process automation. Beyond its immediate application, the same architecture could be adapted for a range of business functions, from tracking competitor hiring trends and conducting market analysis to sourcing potential B2B sales leads.

Ultimately, this case study illustrates a critical point for modern leadership: the tools to build significant operational efficiencies are more accessible than ever. The imperative is for us to be hands-on, exploring these technologies to discover and implement new sources of strategic advantage.